
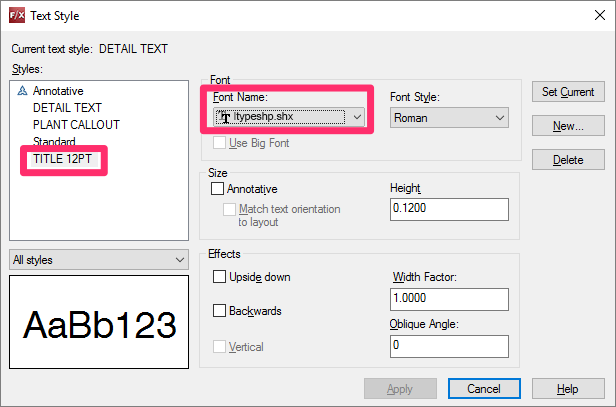

10/20/2021 Upside Down Text Autocad For Mac 2018

The point that you specified at the prompt is also stored as the insertion point of the text. If the TEXTED system variable is set to 1, text created using TEXT displays the Edit Text dialog box. While you are in the TEXT command, press Tab or Shift+Tab to move forward and back between the sets of single-line text.

It no longer creates upside-down text.

Here are the steps to generate and use Star Symbols text:

Step 1: Enter the text from the keyboard in the textbox under 'Input your text here'.

Step 2: The page then provides fancy-style Star Symbols text.

Step 3: Copy and paste the Star Symbols.

Source: emojistock.com/star-symbol-emojis/.

Detecting text in constrained, controlled environments can typically be accomplished by using heuristic-based approaches, such as exploiting gradient information or the fact that text is typically grouped into paragraphs and characters appear on a straight line. An example of such a heuristic-based text detector can be seen in my previous blog post on Detecting machine-readable zones in passport images.

Natural scene text detection is different, though, and much more challenging. Due to the proliferation of cheap digital cameras, not to mention the fact that nearly every smartphone now has a camera, we need to be highly concerned with the conditions the image was captured under and, furthermore, with what assumptions we can and cannot make.

Figure 1: Examples of natural scene images where text detection is challenging due to lighting conditions, image quality, and non-planar objects (Figure 1 of Mancas-Thillou and Gosselin).

I’ve included below a summarized version of the natural scene text detection challenges described by Celine Mancas-Thillou and Bernard Gosselin in their excellent 2017 paper, Natural Scene Text Understanding:

Image/sensor noise: Sensor noise from a handheld camera is typically higher than that of a traditional scanner.

Resolution: Not all cameras are created equal; we may be dealing with cameras with sub-par resolution.

Lighting conditions: We cannot make any assumptions regarding our lighting conditions in natural scene images. It may be near dark, the flash on the camera may be on, or the sun may be shining brightly, saturating the entire image.

Blurring: Uncontrolled environments tend to have blur, especially if the end user is utilizing a smartphone that does not have some form of stabilization.

Viewing angles: Natural scene text can have viewing angles that are not parallel to the text, making the text harder to recognize.

Press Alt and click a text object to edit a set of text lines. Once you leave the TEXT command, these actions are no longer available. If TEXT was the last command entered, pressing Enter at the Specify Start Point of Text prompt skips the prompts for paper height and rotation angle.

Non-planar objects: Consider what happens when you wrap text around a bottle: the text on the surface becomes distorted and deformed. While humans may still be able to easily “detect” and read the text, our algorithms will struggle. We need to be able to handle such use cases.

Text in natural scenes may be reflective, including logos, signs, etc.

Unknown layout: We cannot use any a priori information to give our algorithms “clues” as to where the text resides.

With the release of OpenCV 3.4.2 and OpenCV 4, we can now use a deep learning-based text detector called EAST, which is based on Zhou et al.’s 2017 paper, EAST: An Efficient and Accurate Scene Text Detector. We call the algorithm “EAST” because it is an Efficient and Accurate Scene Text detection pipeline.

Figure 3: The structure of the EAST text detection Fully Convolutional Network (Figure 3 of Zhou et al.).

The EAST pipeline is capable of predicting words and lines of text at arbitrary orientations on 720p images and, furthermore, can run at 13 FPS, according to the authors. Perhaps most importantly, since the deep learning model is end-to-end, it is possible to sidestep computationally expensive sub-algorithms that other text detectors typically apply, including candidate aggregation and word partitioning. To build and train such a deep learning model, the EAST method utilizes novel, carefully designed loss functions. For more details on EAST, including architecture design and training methods, be sure to refer to the publication by the authors.

As we’ll learn, OpenCV’s text detector implementation of EAST is quite robust, capable of localizing text even when it’s blurred, reflective, or partially obscured.

Upside Down Text Autocad 2018 Free To Share

If you have any improvements to the method, please do feel free to share them in the comments below.

Project structure

To start, be sure to grab the source code + images to today’s post by visiting the “Downloads” section.

text_detection.py : Detects text in static images.

text_detection_video.py : Detects text via your webcam or input video files.

Both scripts make use of the serialized EAST model ( frozen_east_text_detection.pb) provided for your convenience in the “Downloads.” You may wish to add your own images collected with your smartphone or ones you find online.

Notably we import NumPy, OpenCV, and my implementation of non_max_suppression from imutils.object_detection. We then proceed to parse five command line arguments on Lines 9-20:

-east : The EAST scene text detector model file path.

-min-confidence : Probability threshold to determine text. Optional with default=0.5.

-width : Resized image width; must be a multiple of 32.

Implementation notes

The text detection implementation I am including today is based on OpenCV’s official C++ example; however, I must admit that I had a bit of trouble when converting it to Python.

To start, there are no Point2f and RotatedRect functions in Python, and because of this, I could not 100% mimic the C++ implementation. The C++ implementation can produce rotated bounding boxes, but unfortunately the one I am sharing with you today cannot.

Secondly, the NMSBoxes function does not return any values for the Python bindings (at least for my OpenCV 4 pre-release install), ultimately resulting in OpenCV throwing an error. The NMSBoxes function may work in OpenCV 3.4.2, but I wasn’t able to exhaustively test it. I got around this issue by using my own non-maxima suppression implementation in imutils, but again, I don’t believe these two are 100% interchangeable, as it appears NMSBoxes accepts additional parameters.

Given all that, I’ve tried my best to provide you with the best OpenCV text detection implementation I could, using the working functions and resources I had.

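
For readers unfamiliar with non-maxima suppression, here is a minimal pure-Python sketch of the general greedy, IoU-based idea. It is not the imutils implementation and not OpenCV's NMSBoxes, and it may differ from both in how overlap is measured. The function names, the (x1, y1, x2, y2) box format, the 0.3 threshold, and the sample boxes are all assumptions made for illustration.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold=0.3):
    """Return the indices of the kept boxes, highest score first.

    A box is kept only if it does not overlap any already-kept
    (higher-scoring) box by more than iou_threshold.
    """
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


# Hypothetical detections: the first two boxes overlap heavily.
boxes = [(10, 10, 110, 60), (12, 12, 112, 62), (200, 200, 300, 250)]
scores = [0.9, 0.8, 0.95]
kept = greedy_nms(boxes, scores)
print(kept)
```

In this toy input, the two heavily overlapping boxes collapse to the higher-scoring one, while the isolated box is kept untouched.
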
The -height argument sets the resized image height; it must be a multiple of 32 and is optional with default=320.

Important: The EAST text detector requires that your input image dimensions be multiples of 32, so if you choose to adjust your -width and -height values, make sure they are multiples of 32!

From there, let’s load our image and resize it:

    # load the input image and grab the image dimensions
    # set the new width and height and then determine the ratio in change
    (newW, newH) = (args["width"], args["height"])
    # resize the image and grab the new image dimensions

On Lines 23 and 24, we load and copy our input image. From there, Lines 30 and 31 determine the ratio of the original image dimensions to the new image dimensions (based on the command line arguments provided for -width and -height). Then we resize the image, ignoring aspect ratio (Line 34).

In order to perform text detection using OpenCV and the EAST deep learning model, we need to extract the output feature maps of two layers:

    # define the two output layer names for the EAST detector model that
    # we are interested - the first is the output probabilities and the
    # second can be used to derive the bounding box coordinates of text

We construct a list of layerNames on Lines 40-42. The second layer is the output feature map that represents the “geometry” of the image; we’ll be able to use this geometry to derive the bounding box coordinates of the text in the input image.

Let’s load OpenCV’s EAST text detector:

    # load the pre-trained EAST text detector
    print(" loading EAST text detector.")
    # construct a blob from the image and then perform a forward pass of
    # the model to obtain the two output layer sets
    blob = cv2.dnn.blobFromImage(image, 1.0, (W, H),(123.68, 116.78, 103.
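
As a rough sketch of the argument parsing and resize-ratio steps described in the text, the snippet below uses only Python's standard argparse module. Several details are assumptions rather than the post's actual code: the double-dash option spellings, the --image option name, the use of 320 as the default for both size arguments, the helper names parse_args and resize_ratios, and the hypothetical file names and 640x480 image size in the usage lines.

```python
import argparse


def parse_args(argv=None):
    """Parse the five arguments; option spellings here are assumptions."""
    ap = argparse.ArgumentParser(description="EAST text detection arguments (sketch)")
    ap.add_argument("--image", required=True, help="path to the input image")
    ap.add_argument("--east", required=True, help="path to the EAST model file")
    ap.add_argument("--min-confidence", type=float, default=0.5,
                    help="probability threshold to determine text")
    ap.add_argument("--width", type=int, default=320,
                    help="resized image width (multiple of 32)")
    ap.add_argument("--height", type=int, default=320,
                    help="resized image height (multiple of 32)")
    args = vars(ap.parse_args(argv))
    # Reject sizes that are not positive multiples of 32.
    for key in ("width", "height"):
        if args[key] <= 0 or args[key] % 32 != 0:
            ap.error("--{} must be a positive multiple of 32".format(key))
    return args


def resize_ratios(orig_w, orig_h, new_w, new_h):
    """Ratio of the original image dimensions to the resized dimensions."""
    return orig_w / float(new_w), orig_h / float(new_h)


# Hypothetical file names and a hypothetical 640x480 input image.
args = parse_args(["--image", "example.jpg",
                   "--east", "frozen_east_text_detection.pb"])
rW, rH = resize_ratios(640, 480, args["width"], args["height"])
print(args["min_confidence"], rW, rH)
```

Validating the sizes at parse time means a bad -width or -height value fails early with a clear message instead of surfacing later inside the network's forward pass.
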


Author: Nancy
